My Devoxx presentation on Real Options is now available on Slideshare:


Feb 25 Agile France 2013

The Agile France 2013 conference will be held on May 23rd and 24th at the lovely Chalet de la Porte Jaune, close to the Château de Vincennes. You have until March 2nd to send in a “pitch” for a session. We’ve already received several excellent session proposals. I’ll send my proposal in a few minutes. What are you waiting for? These agilists are crazy!

Feb 25 Mini XP Day Benelux 2013

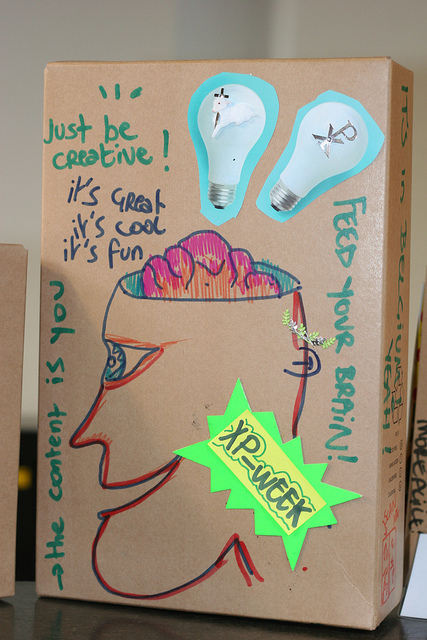

Picture from the “Product Box” session at XP Days Benelux by Yves Hanoulle

Mini XP Day reruns 12 of the best sessions from last year’s program in 3 parallel tracks. The conference takes place in Mechelen, Belgium (between Brussels and Antwerp). There’s room for 90 participants and the conference usually sells out, so register now to make sure you don’t miss this great event.

Feb 25 Agile Open Belgium 2013

22-23 March in Brussels, Belgium

Agile Open Belgium is an open space conference in the tradition of the Agile Open conferences. You decide the topics on the program.


Copyright © 2025 Thinking for a Change - All Rights Reserved
